The Great AI Puzzle: Unraveling the GPU Revolution

In the not-so-distant past, the Graphics Processing Unit (GPU) was a mere cog in the wheel of video gaming. It was a glorified pixel pusher, tasked with rendering lifelike graphics and immersive environments. However, as the demand for artificial intelligence (AI) surged, the humble GPU found itself thrust into the spotlight, transforming from the backbone of gaming into the powerhouse of deep learning and neural networks. This metamorphosis has not only reshaped the tech landscape but has also given rise to new players in the field, such as DeepSeek—a company that is redefining what it means to be efficient in AI training.

Imagine a world in which machines not only assist us but outsmart us, completing our thoughts before we even utter them. This isn’t just the realm of science fiction; it’s fast becoming our reality. In this book, we will delve into the intricate relationship between AI and GPUs, examining how companies like NVIDIA set the stage for this revolution, while newcomers like DeepSeek challenge the status quo. We will explore the technicalities of GPU architecture, the burgeoning importance of efficient computation, and the geopolitical factors at play in this high-stakes arena.

GPU 101: The Subtle Spark from Gaming to Deep Learning

In the grand tapestry of modern computing, the Graphics Processing Unit (GPU) is often overshadowed by its more illustrious counterpart—the Central Processing Unit (CPU). However, this perception is both outdated and misleading. While CPUs are the generalists of the computer world, handling a wide array of tasks, GPUs are the specialists, finely tuned for parallel processing, which has become indispensable in the realms of graphics rendering and, more importantly, artificial intelligence.

The story of GPUs begins in the late 1990s when graphics cards were primarily designed to accelerate 3D rendering for video games. Companies like NVIDIA and ATI (now part of AMD) pioneered the development of these chips, which leveraged parallel processing capabilities to handle multiple tasks simultaneously—tasks that would have taken a CPU much longer to compute. The ability to render complex graphics in real-time was a game-changer, but it was only the beginning.

As gaming technology advanced, so did the demands placed on GPUs. The introduction of programmable shaders allowed game developers to create more detailed and dynamic visuals, pushing GPUs to their limits. However, it wasn’t until the rise of deep learning that GPUs truly found their calling.

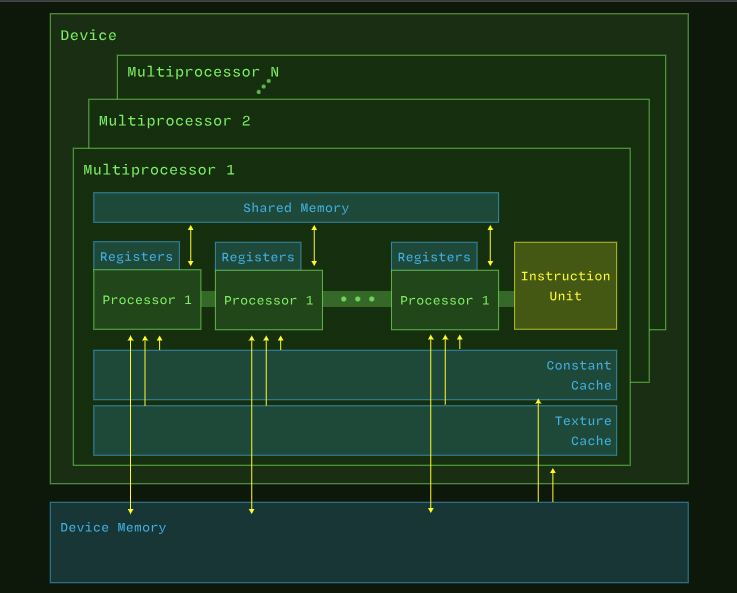

At the heart of a GPU’s architecture lies the principle of parallelism. Unlike CPUs, which might have a handful of cores optimized for sequential processing, GPUs boast thousands of smaller cores designed to perform simultaneous calculations. This design allows GPUs to tackle vast amounts of data at lightning speed—a vital attribute for the large-scale computations required in AI.

Consider the analogy of an assembly line: a CPU is like a skilled artisan, meticulously crafting each piece of a product one at a time. In contrast, a GPU functions like a well-oiled factory, with many workers operating in unison to produce multiple items simultaneously. This parallel processing ability is what gives GPUs their edge in handling the matrix operations that are fundamental to deep learning.

Another critical aspect of GPUs is their dedicated memory, known as VRAM (Video Random Access Memory). VRAM is not a cache. It is the GPU’s own working memory, separate from the computer’s main memory. On modern data-center GPUs it is typically high-bandwidth memory (HBM), which can move data many times faster than the main memory a CPU relies on. Imagine trying to cook late at night without any ingredients in your kitchen: every time you needed something, you’d have to drive to the store. Now, picture having a fully stocked pantry right next to your cooking station. This is essentially what VRAM does for GPUs. It keeps the data they are working on close at hand and feeds it to thousands of cores at very high bandwidth.
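A back-of-the-envelope calculation shows why bandwidth matters. The sketch below estimates how long it takes just to read a model’s weights once from memory. The model size and both bandwidth figures are hypothetical round numbers chosen for illustration. They are not measurements of any particular product.

```python
def read_time_ms(num_bytes: float, bandwidth_gb_per_s: float) -> float:
    """Milliseconds needed to stream num_bytes at the given bandwidth (1 GB = 1e9 bytes)."""
    return num_bytes / (bandwidth_gb_per_s * 1e9) * 1e3


# Hypothetical model: 7 billion parameters stored in a 16-bit format (2 bytes each).
WEIGHT_BYTES = 7e9 * 2

# Assumed round-number bandwidths, for illustration only.
CPU_MAIN_MEMORY_GB_S = 100.0
GPU_HBM_GB_S = 3_000.0

cpu_ms = read_time_ms(WEIGHT_BYTES, CPU_MAIN_MEMORY_GB_S)
gpu_ms = read_time_ms(WEIGHT_BYTES, GPU_HBM_GB_S)
print(f"main memory: {cpu_ms:.1f} ms per pass over the weights")
print(f"GPU memory:  {gpu_ms:.1f} ms per pass over the weights")
```

Training and inference make a very large number of such passes, so a bandwidth gap of this size compounds quickly.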

As artificial intelligence gained traction, particularly in the form of deep learning and neural networks, the demand for GPUs skyrocketed. No longer limited to rendering pixels, these processors were put to work on parameters: the weights and biases that define a neural network. This transition was not without its challenges, but it opened the floodgates for innovation.

Deep learning models, such as those powering image recognition and natural language processing, require enormous computational resources. The massive amounts of data processed through these models are often represented as matrices, and it is here that GPUs truly shine. Their architecture allows them to handle multiple matrix operations in parallel, drastically reducing training times compared to traditional CPU-based methods.

The convergence of AI and GPUs has sparked a technological renaissance. Companies are investing heavily in AI research and development, creating a burgeoning ecosystem where GPUs serve as the cornerstone of innovation. Frameworks such as TensorFlow and PyTorch have emerged with GPU support built in from the ground up, so models written in them can run on GPUs with little extra effort. These tools allow researchers and developers to build sophisticated models with unprecedented ease and speed.

Moreover, the competitive landscape of AI has resulted in a race for efficiency. As we will explore in the subsequent chapters, companies like NVIDIA have responded to this demand by designing GPUs optimized for deep learning, while challengers like DeepSeek are reimagining how these resources can be utilized.

The journey from gaming to deep learning is a testament to the transformative power of technology. What started as a quest for better graphics has morphed into a new frontier in artificial intelligence—one where GPUs play an indispensable role. As we continue this exploration, we will uncover the intricate connections between AI, GPUs, and the companies that are driving this revolution forward. The stage is set; the actors are in place, and the plot thickens. Stay tuned for the next chapter as we delve deeper into why AI swoons for GPUs.

Why AI Swoons for GPUs

As we delve deeper into the relationship between artificial intelligence (AI) and Graphics Processing Units (GPUs), it becomes increasingly clear that this pairing is not merely functional; it is symbiotic. AI’s insatiable appetite for computation aligns perfectly with the capabilities of GPUs, creating a partnership that has the potential to redefine the landscape of technology as we know it. In this chapter, we will explore the reasons behind AI’s preference for GPUs, focusing on three critical aspects: the efficiency of matrix operations, the reduction in training time and costs, and the developer-friendly ecosystem that has emerged around these powerful processors.

At the core of deep learning lies the concept of matrices. Neural networks are loosely inspired by the way neurons in the brain pass signals to one another, and they rely heavily on matrix operations to learn from data. A neural network consists of layers of interconnected nodes, each representing a neuron. Each node computes a weighted sum of its inputs, adds a bias, and passes the result through an activation function to produce an output. For a whole layer, those weighted sums amount to a single matrix-vector multiplication.
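To make this concrete, here is a minimal pure-Python sketch of a single fully connected layer. It assumes a ReLU activation, which is one common choice; the text does not specify one. The names `dense_layer` and `relu` are illustrative and do not come from any library.

```python
from typing import Callable, List


def relu(z: float) -> float:
    """Rectified linear unit: passes positive values through and zeroes out negatives."""
    return max(0.0, z)


def dense_layer(
    x: List[float],
    weights: List[List[float]],
    biases: List[float],
    activation: Callable[[float], float] = relu,
) -> List[float]:
    """Fully connected layer: y[j] = activation(sum_i weights[j][i] * x[i] + biases[j]).

    Each output y[j] uses only row j of the weight matrix, so the outputs do not
    depend on one another and could all be computed at the same time.
    """
    return [
        activation(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


x = [1.0, 2.0]                                   # two input values
W = [[0.5, -1.0], [1.0, 1.0], [-2.0, 0.5]]       # three neurons, two weights each
b = [0.0, 0.5, 1.0]                              # one bias per neuron
print(dense_layer(x, W, b))  # → [0.0, 3.5, 0.0]
```

On a GPU, each of those row-by-vector products, repeated across thousands of neurons, can be handed to a different group of cores. That is the independence the parallel hardware exploits.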

GPUs excel at handling these matrix operations because of their parallel processing architecture. A CPU works through thousands of such operations a few at a time. A GPU, by contrast, can spread them across thousands of cores and compute many of them at once. This capability is akin to having a multilingual translator who can process multiple conversations simultaneously, rather than one at a time.

A simple example can illustrate this point: consider a neural network tasked with recognizing images. Each image can be represented as a matrix of pixel values, and each layer of the network performs transformations on these matrices. A GPU can compute these transformations in parallel, significantly speeding up the training process. Andrew Ng, a prominent figure in AI, has likened deep learning to building a rocket ship: the models are the engine, and the huge amounts of data are the fuel. GPUs are what allow that engine to burn through vast amounts of fuel efficiently.
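The image example can be sketched the same way. Below, each image in a batch is flattened into one row of a matrix. Applying a layer to the whole batch then becomes a single matrix multiplication made of many independent dot products. The tiny images, the helper names, and the sizes used in the cost estimate (64 grayscale 28x28 images, a 128-neuron layer) are all hypothetical.

```python
from typing import List, Tuple

Image = List[List[float]]  # rows of pixel values


def flatten(image: Image) -> List[float]:
    """Turn a 2-D grid of pixels into one long vector, row by row."""
    return [pixel for row in image for pixel in row]


def batched_layer(images: List[Image], weights: List[List[float]]) -> List[List[float]]:
    """Compute Y = X @ W^T, where row b of X is flattened image b and row j of W is neuron j.

    Every entry Y[b][j] is a separate dot product that needs no other entry.
    """
    X = [flatten(img) for img in images]
    return [[sum(w * p for w, p in zip(neuron, pixels)) for neuron in weights] for pixels in X]


def layer_cost(batch: int, height: int, width: int, neurons: int) -> Tuple[int, int]:
    """Return (independent dot products, total multiply-adds) for one layer on one batch."""
    dot_products = batch * neurons
    return dot_products, dot_products * height * width


images = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 1.0], [1.0, 0.0]]]  # two tiny 2x2 "images"
weights = [[1.0, 1.0, 1.0, 1.0], [1.0, 0.0, 0.0, -1.0]]       # two neurons
print(batched_layer(images, weights))  # → [[10.0, -3.0], [2.0, 0.0]]

# Hypothetical sizes: 64 grayscale 28x28 images feeding a 128-neuron layer.
print(layer_cost(64, 28, 28, 128))  # → (8192, 6422528)
```

Even this small hypothetical layer needs over six million multiply-adds per batch. All of them fall into 8,192 dot products that a GPU can work on side by side.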

In the fast-paced world of AI development, time is of the essence. The ability to train a model quickly can mean the difference between success and failure in a competitive landscape. GPUs offer a solution to this challenge, enabling faster training times and ultimately lowering costs for organizations.

For instance, consider a company developing a deep learning model for autonomous vehicles. The training process involves exposing the model to millions of images and scenarios to help it learn how to navigate the real world. By leveraging GPUs, the company can significantly reduce the time required for training—from weeks or even months to just days. This acceleration not only enhances productivity but also allows organizations to iterate and improve their models more frequently.
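How much time GPUs save can be estimated with simple arithmetic. The sketch below uses a widely cited rule of thumb for transformer-style models: training costs about 6 floating-point operations (FLOPs) per parameter per training token. That rule is an outside assumption, not a figure from this book. The model size, token count, device count, and both sustained throughputs are hypothetical. The estimate also ignores communication overhead.

```python
SECONDS_PER_DAY = 86_400


def training_flops(parameters: float, tokens: float) -> float:
    """Rule-of-thumb training cost: about 6 FLOPs per parameter per training token."""
    return 6.0 * parameters * tokens


def training_days(total_flops: float, devices: int, effective_flops_per_device: float) -> float:
    """Wall-clock days if the work splits perfectly across devices at the given sustained rate."""
    return total_flops / (devices * effective_flops_per_device) / SECONDS_PER_DAY


# Hypothetical workload: a 1-billion-parameter model trained on 20 billion tokens.
work = training_flops(1e9, 2e10)

# Hypothetical sustained throughputs (not vendor specs): 1e12 vs 1e14 FLOP/s per device.
cpu_days = training_days(work, devices=8, effective_flops_per_device=1e12)
gpu_days = training_days(work, devices=8, effective_flops_per_device=1e14)
print(f"CPU-class: {cpu_days:.0f} days, GPU-class: {gpu_days:.1f} days")
```

Under these assumptions, a job that would take roughly half a year on the slower devices finishes in under two days on the faster ones. This is the kind of gap the autonomous-vehicle example describes.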

Moreover, the cost-effectiveness of GPUs cannot be overlooked. While the initial investment in GPUs can be substantial, the long-term savings gained from reduced training times and improved performance often outweigh the costs. As the demand for AI applications continues to rise, companies are increasingly recognizing the financial benefits of adopting GPU-based solutions.

One of the most compelling reasons AI has gravitated towards GPUs is the robust ecosystem of development tools and libraries that have emerged around them. Frameworks like TensorFlow, PyTorch, and Keras are designed with GPU optimization in mind, allowing developers to harness the power of these processors without needing to become experts in low-level programming.

These frameworks provide high-level abstractions that simplify the process of building and training neural networks. Developers can focus on designing their models rather than spending countless hours optimizing code for GPU performance. This ease of use has democratized AI, enabling a broader range of individuals and organizations to participate in the field.

Additionally, NVIDIA has played a pivotal role in nurturing this ecosystem through its CUDA (Compute Unified Device Architecture) platform. CUDA allows developers to write code that can run on NVIDIA GPUs, providing a bridge between high-level programming languages and the low-level operations required for efficient GPU utilization. As a result, developers can leverage the power of GPUs without needing to delve into the complexities of hardware-level optimizations.

To illustrate the harmony between AI and GPUs, consider the relationship between a virtuoso pianist and a grand piano. The pianist possesses immense talent, but without a quality instrument, that talent may never reach its full potential. Similarly, AI models have the capacity to learn and make predictions, but they require the computational power of GPUs to fully realize their capabilities.

This metaphor highlights the importance of choosing the right tools for the task at hand. Just as a skilled pianist selects a grand piano that resonates with their style, AI developers must select GPUs that align with their models’ requirements. The right combination can lead to extraordinary results, pushing the boundaries of what is possible in the realm of artificial intelligence.

The partnership between AI and GPUs is built on a foundation of efficiency, speed, and accessibility. As we have seen, GPUs excel in handling matrix operations, dramatically reducing training times and costs while providing developers with a rich ecosystem of tools. This synergy has fueled the rapid advancement of AI technologies, allowing them to tackle increasingly complex tasks.

In the next chapter, we will turn our attention to NVIDIA, the titan of the GPU industry, and explore how it has shaped the landscape of AI through its innovative hardware and strategic vision. As we continue our journey through the Great AI Puzzle, we will uncover the key players and their contributions to this exciting field.

NVIDIA: From Shading Pixels to Shaping AI

In the realm of GPUs, NVIDIA stands as a titan, a behemoth whose influence has permeated the very fabric of computing. Founded in 1993 by Jensen Huang, Chris Malachowsky, and Curtis Priem, NVIDIA initially carved its niche in the gaming industry, producing graphics cards that set new standards for visual fidelity. However, as the world shifted towards artificial intelligence, NVIDIA adeptly pivoted, transforming itself into a cornerstone of the AI revolution. In this chapter, we will explore NVIDIA’s journey, the innovations that propelled its rise, and the strategic maneuvers that established it as the go-to provider for AI hardware.

NVIDIA’s ascent began with its groundbreaking RIVA series of graphics cards, starting with the RIVA 128 in 1997, which laid the foundation for its dominance in the gaming sector. The company’s innovations in 3D graphics rendering revolutionized video gaming and set the stage for future advancements. Chief among them was the GeForce 256, released in 1999 and marketed as the world’s first GPU. This early success was instrumental in building NVIDIA’s brand, but it was the advent of deep learning that catapulted the company into a new era.

As AI research gathered pace, researchers realized that the computational demands of deep learning were far greater than those of traditional computing tasks. They also realized that GPUs could supply the parallel processing that neural networks require. A turning point came in 2012. That year, AlexNet, a neural network trained on two NVIDIA GeForce GTX 580 GPUs, won the ImageNet image-recognition competition by a wide margin. Researchers and developers quickly discovered that these processors could dramatically shorten training times, positioning NVIDIA as the leader in the AI hardware market.

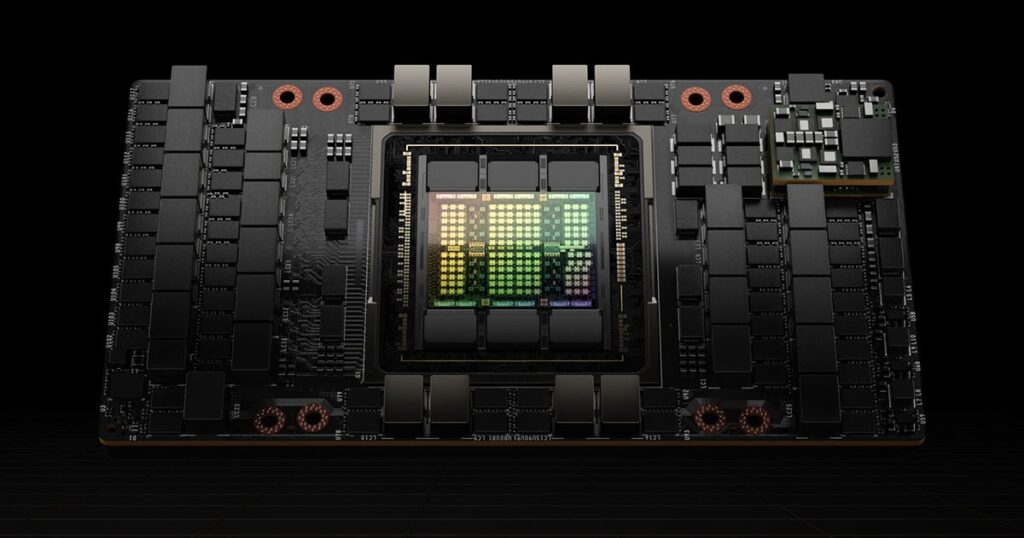

One of the defining moments in NVIDIA’s evolution was the introduction of Tensor Cores—specialized hardware designed specifically for deep learning operations. First unveiled in the Volta architecture, Tensor Cores were engineered to perform mixed-precision matrix multiplications at unprecedented speeds. This innovation was a game-changer for AI researchers, allowing them to train larger models faster and more efficiently.

Tensor Cores multiply matrices stored in FP16 (half precision) while accumulating the results in FP32 (single precision). The lower-precision inputs cut memory traffic and let the hardware perform far more operations per cycle, while the higher-precision accumulation protects accuracy. On the V100, NVIDIA rated the Tensor Cores at roughly eight times the peak throughput of the chip’s standard FP32 units. This capability is particularly crucial in deep learning, where the training of models often requires an intricate dance of calculations involving vast matrices. With the introduction of Tensor Cores, NVIDIA positioned itself at the forefront of AI hardware, providing researchers with the tools they needed to push the boundaries of what was possible in machine learning.
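A small emulation shows why accumulating in higher precision matters. The sketch below uses Python’s `struct` module, whose `"e"` and `"f"` formats pack IEEE 754 half- and single-precision values, to round numbers to FP16 and FP32. It then sums FP16 inputs with either an FP16 or an FP32 running total. It models only the numerics, not how Tensor Cores are built or how fast they run, and the helper names are illustrative.

```python
import struct
from typing import Callable, Sequence


def to_fp16(x: float) -> float:
    """Round to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot(a: Sequence[float], b: Sequence[float], round_acc: Callable[[float], float]) -> float:
    """Dot product of FP16 inputs; the running sum is rounded with round_acc after each step."""
    acc = 0.0
    for x, y in zip(a, b):
        product = to_fp16(x) * to_fp16(y)  # inputs are stored in FP16
        acc = round_acc(acc + round_acc(product))
    return acc


n = 10_000
a = [0.1] * n
b = [1.0] * n
exact = n * to_fp16(0.1)  # what a perfect accumulator would give for these FP16 inputs

fp16_sum = dot(a, b, to_fp16)  # FP16 accumulator: stalls once 0.1 is under half a step
fp32_sum = dot(a, b, to_fp32)  # FP32 accumulator, in the spirit of mixed precision
print(f"exact {exact:.3f}, FP16 accumulator {fp16_sum:.3f}, FP32 accumulator {fp32_sum:.3f}")
# → exact 999.756, FP16 accumulator 256.000, FP32 accumulator 999.756
```

Once the FP16 running total reaches 256, the gap between neighboring FP16 values (0.25) is too large for an addend of about 0.1 to register, so the sum stops growing. The FP32 accumulator has enough precision to track the true total.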

As AI pioneer Yann LeCun aptly stated, “The future is about learning from data, and the tools we have today are not sufficient. We need faster hardware and better algorithms.” NVIDIA’s Tensor Cores exemplify this sentiment, embodying the marriage of cutting-edge hardware and advanced algorithms that fuel AI’s rapid advancement.

While NVIDIA’s hardware innovations are undoubtedly impressive, the company’s success is also attributed to its strategic foresight in creating a comprehensive software ecosystem. The CUDA platform, launched in 2006, allowed developers to leverage the power of NVIDIA GPUs through a user-friendly programming model. CUDA opened the floodgates for GPU programming, enabling a wide range of applications beyond gaming, including scientific computing, simulations, and, crucially, deep learning.

In addition to CUDA, NVIDIA has invested heavily in developing libraries and frameworks tailored for AI research. Libraries such as cuDNN (CUDA Deep Neural Network) provide highly optimized implementations of common deep learning operations, significantly improving performance for neural networks. By integrating these tools into popular frameworks like TensorFlow and PyTorch, NVIDIA has positioned itself as an indispensable partner for AI developers.

The company’s acquisition of Mellanox Technologies in 2020 further solidified its strategy, enhancing its capabilities in high-performance networking and data centers. This move was a clear signal of NVIDIA’s commitment to supporting the growing demand for AI in enterprise environments, where efficient data transfer and processing are paramount.

NVIDIA’s meteoric rise in the AI space has not come without challenges, particularly in navigating the complexities of supply and demand. The global appetite for GPUs has surged, driven by the explosion of AI applications across industries, from healthcare to finance. This unprecedented demand has led to supply constraints, creating a competitive landscape where companies vie for access to NVIDIA’s cutting-edge technology.

Amidst these challenges, NVIDIA has maintained its market mojo by continuously innovating and expanding its product lineup. The introduction of the A100 Tensor Core GPU marked a significant leap forward, offering unprecedented performance for AI training and inference tasks. As the demand for AI capabilities continues to grow, NVIDIA’s commitment to research and development ensures that it remains at the forefront of the industry.

Beyond hardware and software, NVIDIA has cultivated a vibrant community of developers, researchers, and enthusiasts through initiatives like the NVIDIA Developer Program and the GPU Technology Conference (GTC). These platforms provide opportunities for knowledge sharing, collaboration, and networking, fostering an environment where innovation can thrive.

NVIDIA’s dedication to education and outreach is evident in its efforts to support AI research and development at academic institutions and startups. The company’s sponsorship of research grants and partnerships with universities has helped to nurture the next generation of AI talent. By investing in education, NVIDIA is not only ensuring its own future success but also contributing to the broader advancement of the field.

NVIDIA’s transformation from a graphics card manufacturer to a powerhouse in AI hardware is a testament to its ability to adapt and innovate in a rapidly changing landscape. Through groundbreaking technologies like Tensor Cores, a robust software ecosystem, and strategic market maneuvers, NVIDIA has solidified its position as the leader in the AI revolution.

As we move forward in this exploration of the Great AI Puzzle, we will turn our attention to DeepSeek, a rising star in the AI arena that is challenging NVIDIA’s dominance. The emergence of DeepSeek highlights the dynamic nature of the industry and the ever-evolving competition for efficiency and performance in AI training. Join us as we uncover the story behind this intriguing new player and its potential impact on the future of artificial intelligence.

DeepSeek Emerges: A Challenger in the AI Arena

As the landscape of artificial intelligence continues to evolve, a new player has emerged from the shadows, challenging the established giants of the industry. Enter DeepSeek, a Chinese startup that has quickly garnered attention for its innovative approaches to AI training and efficiency. Working with a reportedly constrained supply of GPUs, and committed to pushing the envelope of what is possible in artificial intelligence, DeepSeek has become a formidable contender in a space dominated by the likes of NVIDIA. In this chapter, we will explore DeepSeek’s origins, its unique strategies, and the implications of its rise for the future of AI.

DeepSeek was founded in 2023 in Hangzhou by Liang Wenfeng and is backed by the quantitative hedge fund High-Flyer, which he co-founded. It was born out of a vision to create cutting-edge AI solutions that could rival those produced by established players. While the company may not boast the same pedigree as NVIDIA or Google, it has quickly made a name for itself through sheer ingenuity and a focus on high-efficiency training methods. The founders of DeepSeek recognized early on that in order to compete, they needed to think differently and achieve remarkable results with fewer resources.

As the AI arms race intensified globally, especially in China, DeepSeek’s founders set their sights on optimizing GPU usage in ways that had not been widely explored. Their approach was not just about acquiring more GPUs; it was about maximizing the performance of the GPUs they had. This philosophy of efficiency and innovation would soon pay off, positioning DeepSeek as a compelling rival in the AI arena.

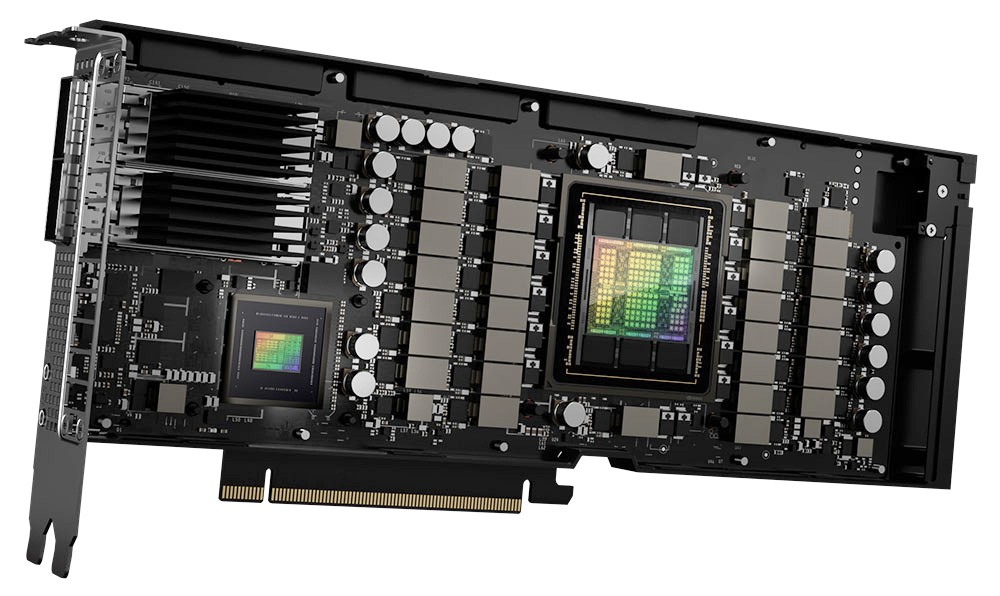

In contrast to the expansive GPU farms of giants like OpenAI, DeepSeek has reportedly achieved remarkable performance with comparatively modest training runs. The company says it trained its DeepSeek-V3 model on a cluster of 2,048 NVIDIA H800 GPUs. Some industry analysts estimate that its total fleet is closer to 50,000 Hopper-generation GPUs, but DeepSeek has not confirmed that figure. Either way, the disparity raises eyebrows and prompts questions: How can a startup with fewer resources compete with well-funded, resource-rich titans?

The answer lies in DeepSeek’s innovative engineering and strategic focus. By concentrating on optimizing their existing hardware and leveraging advanced software techniques, DeepSeek has managed to achieve performance levels that rival those of larger competitors. This situation can be likened to the biblical tale of David and Goliath, where ingenuity and precision can triumph over brute strength.

DeepSeek’s performance paradox is rooted in its commitment to optimization. Many AI labs have approached their projects with an abundance mindset that prioritizes resource acquisition. DeepSeek, by contrast, has adopted a mindset of necessity-driven innovation. U.S. export restrictions on advanced GPUs limited the company’s access to the latest technologies. The H800 chips it reports using, for example, are a China-market variant of the H100 with reduced chip-to-chip interconnect bandwidth. The team was forced to think creatively.

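This necessity inspired a deeper exploration of how to fine-tune their training processes. Rather than relying on more hardware, DeepSeek focused on extracting maximum performance from the hardware it had: optimizing training routines, minimizing data transfer times, and refining model architectures. Its DeepSeek-V3 technical report describes several such techniques. They include training in the low-precision FP8 number format, a mixture-of-experts design that activates only a fraction of the model’s parameters for each token, and a pipeline schedule that overlaps computation with communication between GPUs. Together, these measures delivered efficiency levels that surprised much of the industry.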

In the realm of AI, data is often referred to as the new oil—a valuable resource that fuels innovation and drives advancements. DeepSeek’s approach to data curation and utilization has allowed it to extract insights and training information that traditional methods often overlook. The company has invested heavily in gathering diverse datasets, ensuring that its models are not only robust but also capable of generalizing to new environments and tasks.

This emphasis on data quality, not just quantity, is meant to help DeepSeek’s models learn effectively and generalize well rather than overfit. It does not mean the models are trained on small datasets. DeepSeek-V3, for instance, was pretrained on 14.8 trillion tokens, a corpus comparable in scale to those of its leading peers. The company’s efficiency gains come mainly from how it uses compute, not from using dramatically less data.

DeepSeek’s emergence signifies a pivotal shift in the AI ecosystem. As the company continues to push the boundaries of efficiency, it challenges the prevailing notion that more hardware equates to better performance. This disruption has the potential to reshape the industry, prompting other companies to reevaluate their resource allocation and training strategies.

Investors and industry experts are taking notice of DeepSeek’s impressive trajectory. Some are optimistic about the company’s potential to democratize access to AI by providing high-quality models without the exorbitant costs typically associated with large-scale GPU deployments. Others, however, are wary—wondering if DeepSeek’s approach could threaten the traditional GPU market and the business models of established players.

As we look to the future, the rise of DeepSeek raises important questions about the direction of AI development. Will efficiency become the new gold standard for success, overshadowing the traditional metrics of resource acquisition? How will established players respond to this new competitive landscape?

What is clear is that the AI arms race is far from over. With the emergence of challengers like DeepSeek, the dynamics of the industry are shifting, creating both opportunities and challenges. The interplay between established giants and nimble startups will define the next chapter of artificial intelligence, pushing the boundaries of what is possible.

DeepSeek’s ascent in the AI arena signifies a remarkable shift in the industry, showcasing the power of innovation and efficiency over sheer resource availability. As the company continues to develop high-performance AI solutions with fewer GPUs, it challenges established paradigms and invites us to rethink our approaches to AI training.

In the next chapter, we will delve into PTX, DeepSeek’s hidden weapon, which has been instrumental in its optimization efforts. By examining this technology, we can better understand how DeepSeek is redefining GPU performance and what that means for the future of AI development.

PTX: DeepSeek’s Hidden Weapon

In the competitive arena of artificial intelligence, where every millisecond counts and efficiency is paramount, one term has begun to echo through the corridors of innovation: PTX, or Parallel Thread Execution. Most of the AI community programs NVIDIA GPUs through CUDA C++ and the libraries built on top of it. DeepSeek has reached one level lower, writing PTX directly for performance-critical parts of its GPU code, a layer most developers never need to touch. This chapter will delve into the intricacies of PTX, its advantages over traditional methods, and the profound implications it holds for the future of GPU computing and AI.

PTX serves as an intermediate representation between high-level programming languages and the low-level instructions that GPUs actually execute. When CUDA C++ code is compiled, NVIDIA’s compiler emits PTX, a virtual instruction set. NVIDIA’s assembler or graphics driver then translates that PTX into the machine code (called SASS) of a specific GPU generation. Developers can also write PTX by hand, or embed it in CUDA C++ as inline assembly. Because PTX stays stable across GPU generations, it offers a degree of portability while exposing far more control over the hardware than high-level code does.

To visualize this, think of PTX as a skilled interpreter who translates complex ideas into multiple languages. While the original message may be rich and nuanced, the interpreter ensures that the meaning is preserved across different languages. In the context of GPU programming, PTX enables developers to craft sophisticated algorithms while retaining the ability to optimize them for specific hardware configurations.

One of the most significant advantages of PTX lies in its ability to provide developers with granular control over GPU resources. With PTX, DeepSeek can fine-tune their operations at a level that standard CUDA implementations may not allow. This capability is akin to a maestro conducting a symphony, where every note, instrument, and tempo can be adjusted to create a harmonious arrangement.

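By dropping below CUDA C++ into PTX, DeepSeek has tapped more of the potential of its GPU architecture. Hand-written PTX lets engineers control details that the compiler would otherwise decide, such as thread management, register usage, and memory traffic. The clearest documented example appears in the DeepSeek-V3 technical report. It describes custom PTX instructions in the kernels that move data between GPUs, which let the team dedicate only a small subset of each GPU’s streaming multiprocessors (20 of the H800’s 132) to communication while the rest keep computing. This meticulous attention to detail allows DeepSeek’s engineers to push the limits of what their hardware can achieve, resulting in faster training.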
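Register allocation matters because registers are a fixed per-multiprocessor budget shared by every resident thread. A kernel that needs more registers per thread leaves room for fewer threads, which gives the GPU less work to switch to while it waits on memory. The simplified calculator below assumes the per-SM limits NVIDIA documents for Hopper-generation GPUs: 65,536 32-bit registers and 2,048 resident threads per streaming multiprocessor. It ignores allocation granularity, shared memory, and block-size limits, so treat its numbers as illustrative. It is a generic occupancy model, not a description of DeepSeek’s kernels.

```python
# Assumed per-SM limits for compute capability 9.0 (Hopper, e.g. H100/H800).
REGISTERS_PER_SM = 65_536
MAX_THREADS_PER_SM = 2_048
WARP_SIZE = 32


def occupancy(registers_per_thread: int) -> float:
    """Fraction of the SM's thread slots that can be resident, limited only by registers.

    Simplified: ignores register allocation granularity, shared memory, and block size.
    """
    threads_by_registers = REGISTERS_PER_SM // registers_per_thread
    # Threads are scheduled in whole warps of 32, so round down to a multiple of 32.
    whole_warps = threads_by_registers // WARP_SIZE
    resident_threads = min(MAX_THREADS_PER_SM, whole_warps * WARP_SIZE)
    return resident_threads / MAX_THREADS_PER_SM


for regs in (32, 64, 96, 128):
    print(f"{regs:3d} registers/thread -> {occupancy(regs):.0%} occupancy")
```

Halving a kernel’s register use can double the number of threads an SM can keep in flight. Higher occupancy is not always faster, though, which is why hand-tuning at this level is a trade-off rather than a rule.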

The implications of DeepSeek’s focus on PTX extend far beyond the immediate performance gains of their own models. If their techniques prove successful, they could redefine how GPU performance is harnessed across the industry. As more companies begin to recognize the value of PTX, there is the potential for a broader shift in programming practices, leading to a more nuanced approach to GPU utilization.

This paradigm shift could challenge the status quo, pushing other AI companies to explore similar optimization strategies. In an industry that has long favored brute-force approaches—accumulating more GPUs to achieve better results—DeepSeek’s success with PTX may inspire a reevaluation of what constitutes effective resource management.

To better understand the practical impact of PTX, consider DeepSeek-V3. According to DeepSeek’s technical report, the model has 671 billion parameters in total, of which about 37 billion are activated for each token. It was trained on a cluster of 2,048 NVIDIA H800 GPUs. By comparison, the supercomputer Microsoft announced in 2020 for training OpenAI’s models had 10,000 GPUs. PTX was one ingredient in this result. DeepSeek used it in the kernels that handle communication between GPUs, alongside architectural and training-level optimizations that streamline training and make the most of the hardware.

In the realm of natural language processing, these low-level optimizations affect how fast a model can be trained more than what the model computes. DeepSeek keeps memory accesses efficient and computations well parallelized so that data flows smoothly through the hardware. This lets it train larger models on more data within the same compute budget. Qualities such as understanding context and nuance, or checking its own reasoning before answering, come from a model’s architecture, data, and training methods rather than from PTX itself.

As the AI landscape continues to evolve, the role of PTX in GPU programming may become increasingly prominent. If DeepSeek can successfully demonstrate the tangible benefits of its PTX-driven optimizations, other organizations may feel compelled to adopt similar strategies to keep pace with rapidly advancing technologies.

The implications of this shift extend beyond performance improvements; they may also influence the design of future GPUs themselves. If the industry begins to prioritize flexibility and customization at this low level, we could see a new generation of GPUs shaped by these techniques. DeepSeek’s own V3 report already closes with suggestions to chip designers. These include offloading communication work from the GPU’s compute units to dedicated hardware and raising the precision of accumulation inside Tensor Cores.

DeepSeek’s adept use of PTX illustrates the power of innovation and optimization in the realm of artificial intelligence. By leveraging this hidden weapon, the company has carved out a unique niche for itself, challenging established norms and pushing the boundaries of what is possible with GPU technology.

As we move forward in our exploration of the Great AI Puzzle, we will take a closer look at how DeepSeek’s efficiency-driven strategies are redefining expectations within the industry. In the next chapter, we will uncover the groundbreaking advancements that DeepSeek has achieved with its models, showcasing how they do more with less and what that means for the future of AI.

Efficiency Redefined: How DeepSeek Does More with Less

In the world of artificial intelligence, efficiency is not just a buzzword—it’s a necessity. As the field evolves, organizations are increasingly pressed to achieve remarkable results using fewer resources. DeepSeek has emerged as a trailblazer in this regard, demonstrating that it is possible to build powerful AI models with significantly less computational overhead compared to industry giants. In this chapter, we will explore the innovative strategies and methodologies that have allowed DeepSeek to redefine efficiency in AI training, showcasing flagship models like DeepSeek-V3 and DeepSeek-R1.

DeepSeek-V3 has quickly established itself as a hallmark of efficiency in the AI landscape. According to DeepSeek’s technical report, the model has 671 billion parameters, of which about 37 billion are activated for each token. Its full training run took roughly 2.8 million GPU-hours on a cluster of 2,048 NVIDIA H800 GPUs. Rival labs such as OpenAI are widely reported to train their frontier models on much larger clusters, though exact figures are rarely disclosed. This stark difference raises a pivotal question: how has DeepSeek managed to achieve such remarkable performance with a fraction of the resources?
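The figures DeepSeek published for DeepSeek-V3 can be checked with simple arithmetic. The V3 technical report breaks the training run into pre-training (2,664K H800 GPU-hours), context extension (119K), and post-training (5K). It then prices the total at an assumed rental rate of $2 per GPU-hour. The sketch below reproduces that arithmetic and adds a wall-clock estimate that assumes all 2,048 GPUs were busy throughout pre-training.

```python
# GPU-hour figures as listed in the DeepSeek-V3 technical report (H800 GPUs).
GPU_HOURS = {
    "pre-training": 2_664_000,
    "context extension": 119_000,
    "post-training": 5_000,
}
CLUSTER_GPUS = 2_048
ASSUMED_USD_PER_GPU_HOUR = 2.0  # the report's assumed rental price, not an actual bill
HOURS_PER_DAY = 24

total_gpu_hours = sum(GPU_HOURS.values())
cost_usd = total_gpu_hours * ASSUMED_USD_PER_GPU_HOUR
# Assumes every GPU in the cluster worked on pre-training the whole time.
pretraining_days = GPU_HOURS["pre-training"] / CLUSTER_GPUS / HOURS_PER_DAY

print(f"total: {total_gpu_hours:,} GPU-hours")   # → total: 2,788,000 GPU-hours
print(f"cost at $2/GPU-hour: ${cost_usd:,.0f}")  # → cost at $2/GPU-hour: $5,576,000
print(f"pre-training: {pretraining_days:.1f} days on {CLUSTER_GPUS:,} GPUs")  # → pre-training: 54.2 days on 2,048 GPUs
```

The report itself notes that these costs cover only the final training run. They exclude earlier research and ablation experiments, so they understate what building the model cost in total.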

At the core of DeepSeek-V3’s efficiency is a meticulous approach to model architecture and training processes. The model uses a mixture-of-experts (MoE) design. Rather than running every parameter on every token, a small router sends each token to a few specialized sub-networks, or experts. That is why only about 37 billion of its 671 billion parameters are active at a time. The training algorithms are optimized as well, with techniques such as FP8 mixed-precision training and carefully scheduled learning rates used to improve both speed and accuracy.
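The routing idea can be sketched in a few lines. The example below uses generic top-k softmax gating, a common textbook form of MoE routing. DeepSeek-V3’s actual router differs in its details. The eight toy experts, their scaling behavior, and the router scores are hypothetical stand-ins for learned networks.

```python
import math
from typing import Callable, List, Sequence, Tuple


def top_k_gate(scores: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and weight them with a softmax over those k scores."""
    chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in chosen)  # subtract the max for numerical stability
    exps = [math.exp(scores[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_layer(
    x: float,
    scores: Sequence[float],
    experts: Sequence[Callable[[float], float]],
    k: int,
) -> Tuple[float, List[int]]:
    """Run only the chosen experts and blend their outputs; the rest are never evaluated."""
    gates = top_k_gate(scores, k)
    output = sum(weight * experts[i](x) for i, weight in gates)
    return output, [i for i, _ in gates]


# Hypothetical toy setup: 8 "experts", each just scales its input by a different factor.
experts = [lambda v, s=i + 1: s * v for i in range(8)]
router_scores = [0.1, 2.0, -1.0, 1.5, 0.0, 0.3, -0.5, 0.2]  # would come from a learned router

y, used = moe_layer(1.0, router_scores, experts, k=2)
print(used)  # → [1, 3]
print(y)
```

Only two of the eight experts did any work here. In a real MoE model the same principle lets total parameter count grow much faster than the compute spent on each token.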

Moreover, DeepSeek has implemented innovative parallel processing techniques that distribute the training workload across its GPUs more effectively. DeepSeek carefully schedules when each GPU computes and when it exchanges data with the others, so that communication overlaps with computation. This minimizes idle time and maximizes throughput, a crucial factor in achieving high performance with fewer GPUs.
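One well-known source of idle time when a model is split across GPUs is the pipeline "bubble." In a simple pipeline, later GPUs wait for the first micro-batch to arrive, and earlier ones sit idle while the last micro-batch drains out. The sketch below simulates a textbook forward-only pipeline in the style of GPipe. It shows that splitting a batch into more micro-batches shrinks the idle fraction. This is an illustration of the general problem, not DeepSeek’s own schedule (its DualPipe algorithm also overlaps computation with communication), and the stage and micro-batch counts are arbitrary.

```python
def pipeline_idle_fraction(stages: int, micro_batches: int) -> float:
    """Simulate a forward-only pipeline and return the fraction of GPU time slots left idle."""
    # Micro-batch j is processed by stage s at time step s + j, so the whole
    # batch finishes after stages + micro_batches - 1 steps.
    total_steps = stages + micro_batches - 1
    busy = [[False] * total_steps for _ in range(stages)]
    for s in range(stages):
        for j in range(micro_batches):
            busy[s][s + j] = True
    idle_slots = sum(1 for row in busy for slot in row if not slot)
    return idle_slots / (stages * total_steps)

def bubble_formula(stages: int, micro_batches: int) -> float:
    """Closed form for the same idle ("bubble") fraction."""
    return (stages - 1) / (stages + micro_batches - 1)

for m in (1, 4, 16, 64):
    print(f"4 GPUs, {m:2d} micro-batches: {pipeline_idle_fraction(4, m):.1%} idle")
```

With four pipeline stages, the idle share falls from 75% with a single micro-batch to under 5% with 64. This is why schedulers work hard to keep every GPU fed.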

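Another groundbreaking model developed by DeepSeek is DeepSeek-R1, a reasoning model that works through a problem step by step, producing an explicit “chain of thought” before giving its final answer. Chain-of-thought reasoning is not new in itself. What sets DeepSeek-R1 apart is that it learned to produce and check these chains largely through large-scale reinforcement learning. The model is designed to self-check its own reasoning, essentially proofreading its conclusions before presenting them. This capability not only enhances the reliability of its outputs but also reduces the need for extensive supervised fine-tuning.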

The chain-of-thought mechanism relies on a structured approach to problem-solving, in which the AI breaks complex tasks into smaller, manageable steps. By checking its reasoning at each step, DeepSeek-R1 can catch and correct errors as it goes, leading to more accurate and coherent responses. This self-checking process is akin to a student reviewing their work before submission, ensuring that the final output is polished and precise.
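The step-by-step checking described above can be illustrated with a toy analogy. In this minimal sketch, a hypothetical "reasoner" writes out the running totals of a sum as its chain of thought, and one step contains a deliberate slip. A checker then verifies each step against the one before it. This is only an analogy: DeepSeek-R1 does not call a separate verifier program, and its self-checking is behavior learned during training and expressed in the text it generates. The function names and the arithmetic task are invented for illustration.

```python
def propose_steps(numbers, faulty_step=None):
    """Hypothetical 'reasoner': writes out running totals, optionally slipping up once."""
    steps, total = [], 0
    for i, n in enumerate(numbers):
        total += n
        if i == faulty_step:
            total += 1  # simulated arithmetic slip in one intermediate step
        steps.append(total)
    return steps

def verify_and_correct(numbers, steps):
    """Check each step against the one before it, then redo the chain from the inputs."""
    flagged = []
    previous = 0
    for i, (n, claimed) in enumerate(zip(numbers, steps)):
        # A step is valid if it follows from the previous claimed step.
        if claimed != previous + n:
            flagged.append(i)
        previous = claimed
    # Recompute from the original inputs so an early slip cannot propagate.
    corrected, total = [], 0
    for n in numbers:
        total += n
        corrected.append(total)
    return corrected, flagged

numbers = [3, 5, 2, 4]
draft = propose_steps(numbers, faulty_step=1)
fixed, bad_steps = verify_and_correct(numbers, draft)
print(draft, bad_steps, fixed)
```

Checking each step locally pinpoints where the reasoning went wrong (step 1 here) rather than flagging every later step that inherited the error.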

DeepSeek’s focus on efficiency and innovative training techniques has significant implications for the AI industry at large. If models trained on far fewer GPUs can match or even exceed the performance of models trained on much larger clusters, other organizations may rethink how they allocate resources. This shift could lead to a more sustainable approach to AI development, reducing the environmental impact of the massive energy consumption of large-scale GPU farms.

As DeepSeek continues to showcase its efficiency-driven methodologies, it may also influence market dynamics. Investors and stakeholders may begin to favor companies that prioritize optimization over sheer scale. This could result in increased funding and support for startups that embrace innovative approaches to AI, fostering a culture of ingenuity and creativity within the industry.

The implications of DeepSeek’s efficiency extend beyond the realm of AI models. The principles of resource optimization and innovative training strategies can be applied across various domains, from healthcare to finance, where the demand for rapid and reliable AI solutions is ever-increasing. By leveraging DeepSeek’s methodologies, organizations in diverse sectors can achieve their goals with fewer resources, creating a ripple effect that enhances productivity and innovation.

For instance, in the healthcare sector, AI models are increasingly used for diagnostic purposes, predicting patient outcomes, and personalizing treatment plans. By adopting DeepSeek’s efficiency-driven strategies, healthcare organizations can deploy powerful AI solutions without incurring the prohibitive costs associated with extensive GPU infrastructure. This democratization of AI could lead to improved patient care and outcomes across the globe.

As we look towards the future, the rise of DeepSeek and its emphasis on efficiency signals a new era in the AI landscape. Established players will undoubtedly feel the pressure to evolve and adapt, seeking innovative ways to optimize their own models and reduce resource consumption. The traditional metrics of success—larger models and more GPUs—may give way to a new paradigm focused on intelligent resource management and performance optimization.

This shift will not only impact the way AI models are developed but may also reshape the competitive dynamics within the industry. As efficiency becomes the gold standard, new entrants like DeepSeek could challenge the dominance of established giants, fostering a more equitable and innovative AI ecosystem.

DeepSeek’s commitment to efficiency has reshaped expectations for AI training, showing that remarkable results can be achieved with far fewer resources. Through the development of models like DeepSeek-V3 and DeepSeek-R1, the company has set a new benchmark for efficiency in the industry.

As we continue our exploration of the Great AI Puzzle, we will turn our attention to the global landscape of AI and the geopolitical factors that influence its development. In the next chapter, we will analyze the implications of export limits and the innovative responses from companies like DeepSeek as they navigate the complexities of the international AI arena.

The Global AI Chessboard

As the race for artificial intelligence accelerates, the geopolitical landscape surrounding AI development becomes increasingly complex. Countries are vying for technological supremacy, recognizing that AI capabilities will shape future economies, national security, and global influence. In this chapter, we will explore the key geopolitical factors that are influencing the development of AI, examining how countries respond to competition, the implications of export limits, and the strategies employed by companies like DeepSeek to navigate this evolving landscape.

The rise of AI has transformed it into a strategic asset that nations are eager to harness. Countries like the United States and China are leading the charge, investing heavily in research, talent acquisition, and infrastructure to secure their positions at the forefront of AI technology. This technological arms race is reminiscent of the space race of the mid-20th century, when innovation in one country could tip the balance of power in its favor.

For instance, China has made significant strides in AI development, with the Chinese government pledging substantial investments to foster homegrown AI technologies. Key initiatives like the “Next Generation Artificial Intelligence Development Plan,” launched in 2017, aim to position China as a global leader in AI by 2030. This ambitious plan encompasses a wide array of sectors, including healthcare, transportation, and national defense, with the goal of integrating AI into the fabric of society.

Conversely, the United States has responded with its own strategic initiatives, emphasizing the importance of maintaining a competitive edge in AI. The National AI Initiative Act of 2020 aims to enhance research and development in AI, promote workforce training, and ensure the ethical use of AI technologies. The U.S. also leverages its existing tech ecosystem, home to giants like Google, Microsoft, and NVIDIA, to foster innovation and attract top talent from around the world.

As countries race to secure their positions in the AI landscape, geopolitical tensions have led to increased scrutiny over technology exports. The U.S. government, for instance, has implemented export controls on advanced semiconductor technologies and GPUs, aiming to restrict access to powerful AI hardware for countries deemed a threat to national security, particularly China. These restrictions have a profound impact on the global supply chain and the ability of AI companies to operate effectively.

For companies like DeepSeek, navigating these export limits presents both challenges and opportunities. With limited access to the most advanced chips, DeepSeek has been forced to innovate and make the most of the hardware it can obtain. The company has focused on efficiency and leveraged PTX (Parallel Thread Execution), NVIDIA’s low-level instruction set that CUDA code is compiled into. This has allowed it to maintain a competitive edge, even in the face of supply chain constraints.

Moreover, these geopolitical tensions have prompted a search for alternative suppliers and partnerships. As companies reassess their reliance on specific regions for advanced technologies, we may see a diversification of the global supply chain, with emerging markets and startups gaining prominence in the AI ecosystem.

In the face of geopolitical challenges, collaboration has emerged as a key strategy for navigating the complex landscape of AI development. Countries and companies are increasingly recognizing that partnership can lead to shared knowledge, resources, and capabilities. Collaborative initiatives, such as research consortia and joint ventures, are becoming more common, allowing stakeholders to pool their expertise and accelerate innovation.

DeepSeek, for example, has actively sought partnerships with academic institutions and research organizations to enhance its capabilities. By collaborating with experts in various fields, the company can access cutting-edge research, gain insights into emerging technologies, and bolster its talent pool. This collaborative spirit not only strengthens DeepSeek’s position but also contributes to the broader advancement of AI.

As nations jockey for position in the AI race, ethical considerations surrounding AI development are becoming increasingly important. Issues such as data privacy, algorithmic bias, and the potential for job displacement are gaining attention from policymakers, researchers, and the public alike. Countries must grapple with the ethical implications of their AI strategies, ensuring that advancements in technology do not come at the expense of societal well-being.

DeepSeek is acutely aware of the ethical dimension of its work. The company has made a commitment to responsible AI development, focusing on transparency, accountability, and fairness in its algorithms. By prioritizing ethical considerations, DeepSeek not only strengthens its reputation but also sets an example for others in the industry. In a world where trust in AI technologies is paramount, organizations that prioritize ethical practices will likely gain a competitive advantage.

The global AI chessboard is characterized by intense competition, strategic collaborations, and ethical considerations. As countries engage in a high-stakes race for technological supremacy, companies like DeepSeek are redefining what it means to be competitive in the AI landscape. By focusing on optimization, collaboration, and ethical practices, DeepSeek is carving a unique path forward in an increasingly complex environment.

As we continue our journey through the Great AI Puzzle, we turn to the final chapter, which reflects on the future of GPUs, the evolving landscape of AI, and the promise that lies ahead for both established players and new entrants in this dynamic field.

The Future of GPUs and AI: A New Horizon

As we stand at the intersection of technology and innovation, the future of Graphics Processing Units (GPUs) and artificial intelligence (AI) is teeming with potential. The rapid advancements we have witnessed over the past decade are merely the prologue to a much larger narrative that is still unfolding. This chapter will explore the anticipated developments in GPU technology, the evolving landscape of AI applications, and the possibilities that lie ahead for both established industry giants and emerging innovators like DeepSeek.

The evolution of GPUs has been marked by a relentless pursuit of performance, efficiency, and versatility. As AI continues to permeate various sectors, the next generation of GPUs will be designed with a keen focus on addressing the unique challenges posed by machine learning and deep learning tasks. We are likely to see several key trends as manufacturers innovate to meet the growing demands of AI.

- Specialized Architectures: Just as Tensor Cores revolutionized GPU performance for AI workloads, future GPUs will likely feature increasingly specialized architectures tailored for specific tasks. These architectures may integrate AI accelerators, optical interconnects, and advanced memory systems designed to enhance computational efficiency for deep learning models.

- Increased Energy Efficiency: As environmental concerns mount and energy consumption becomes a pressing issue, GPU manufacturers will prioritize energy efficiency. Innovations in semiconductor technology, such as the transition to 3nm or smaller processes, will enable GPUs to deliver more performance per watt, thereby reducing their carbon footprint.

- Enhanced Parallelism: The design of GPUs will continue to evolve to support even greater levels of parallelism. Future models may incorporate advanced techniques such as dynamic workload distribution and adaptive resource allocation, allowing them to handle a wider range of tasks simultaneously. This shift could significantly enhance the performance of machine learning tasks that require extensive data processing.
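The difference between fixed and dynamic workload distribution can be shown with a small scheduling simulation. This minimal sketch, using hypothetical task costs, compares two approaches. The first is a static round-robin assignment decided in advance. The second is greedy dynamic assignment, where each task goes to whichever worker becomes free first. It illustrates the general idea only; it is not how any particular GPU’s hardware scheduler works.

```python
import heapq

def static_makespan(task_costs, workers):
    """Round-robin plan fixed in advance: task i always goes to worker i % workers."""
    loads = [0] * workers
    for i, cost in enumerate(task_costs):
        loads[i % workers] += cost
    return max(loads)

def dynamic_makespan(task_costs, workers):
    """Each task goes to whichever worker becomes free first (greedy list scheduling)."""
    free_at = [(0, w) for w in range(workers)]  # (time the worker becomes free, worker id)
    heapq.heapify(free_at)
    for cost in task_costs:
        t, w = heapq.heappop(free_at)
        heapq.heappush(free_at, (t + cost, w))
    return max(t for t, _ in free_at)

tasks = [8, 1, 1, 1, 7, 1, 1, 1]  # hypothetical uneven workload
print(static_makespan(tasks, 2), dynamic_makespan(tasks, 2))  # 17 11
```

With these costs, the static plan keeps one worker busy for 17 time units while the other finishes after 4. Dynamic assignment brings the finish time down to 11, close to the ideal of 10.5 (21 units of work split evenly between two workers).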

The future of AI is not limited to just one or two sectors; its applications are boundless and will continue to expand into new domains. As we look ahead, several key areas are poised for transformative change through the integration of AI technologies.

- Healthcare Revolution: AI has already begun to reshape healthcare, and its impact will only deepen. From predictive analytics that anticipate patient outcomes to personalized treatment recommendations driven by vast datasets, AI will enable healthcare providers to deliver more effective and timely care. GPU advancements will be critical in processing medical images, genomics, and electronic health records to identify patterns that can inform treatment decisions.

- Autonomous Systems: The race toward fully autonomous vehicles is well underway, and AI will play a pivotal role in making this vision a reality. As the technology matures, we can expect to see significant improvements in safety, efficiency, and navigation capabilities. GPUs will be instrumental in processing the immense amounts of data generated by sensors and cameras, enabling real-time decision-making in complex environments.

- Creative AI: The intersection of AI and creativity is an exciting frontier where machines are beginning to assist, and even collaborate with, human creators. From generating art and composing music to writing stories, AI has the potential to redefine creative processes. The enhanced computational capabilities of future GPUs will allow for more sophisticated generative models that can produce high-quality outputs in real time.

As the GPU and AI landscapes evolve, emerging players like DeepSeek will continue to challenge the status quo. Their commitment to efficiency, optimization, and innovative approaches will enable them to carve out niches in a competitive market. The success of companies like DeepSeek may inspire a wave of new startups focused on specialized AI solutions, fostering an environment of creativity and disruption.

Moreover, the rise of open-source AI frameworks and collaborative platforms will empower developers and researchers worldwide. This democratization of access to cutting-edge technologies will lead to a more diverse range of voices and ideas contributing to the AI discourse. As more individuals and organizations engage with AI, we can expect to see novel applications and solutions that we have yet to imagine.

As AI technologies become more integrated into our daily lives, the ethical implications of their use will remain at the forefront of discussions. It is imperative that stakeholders prioritize responsible AI development, ensuring that biases are mitigated, data privacy is protected, and transparency is maintained. The future of AI must be built on a foundation of ethical principles, fostering trust and accountability.

Companies like DeepSeek are already setting an example by embedding ethical considerations into their development processes. By promoting fairness, transparency, and inclusivity, they are demonstrating that it is possible to innovate while also being socially responsible. This commitment will resonate with consumers, investors, and regulatory bodies alike, influencing the trajectory of the AI industry.

As we gaze into the future of GPUs and artificial intelligence, we find ourselves on the brink of transformative change. The convergence of specialized GPU architectures, diverse AI applications, and emerging innovators like DeepSeek is poised to redefine our understanding of what is possible. The journey ahead will be marked by challenges and opportunities, requiring collaboration, innovation, and ethical considerations to navigate the complexities of this rapidly evolving landscape.

In the grand puzzle of AI, every piece—the technology, the players, the applications, and the ethical frameworks—interconnects to form a cohesive whole. As we move forward, the promise of AI lies not only in its capabilities but in our collective responsibility to shape its future for the betterment of society.

Epilogue: The Ongoing Journey of AI and GPU Innovation

As we conclude our exploration of the Great AI Puzzle, it is essential to reflect on the themes and insights that have emerged throughout this journey. The landscape of artificial intelligence, underpinned by the evolution of GPU technology, is not just a series of advancements; it is a dynamic ecosystem shaped by innovation, competition, collaboration, and ethical considerations. This epilogue serves as a reminder that while we have uncovered many facets of this journey, the story of AI and GPU innovation is far from complete.

The narrative of AI is continually unfolding, driven by the convergence of technological advancements, societal needs, and the aspirations of individuals and organizations alike. As we have seen, companies like NVIDIA and DeepSeek have played significant roles in shaping this journey, each contributing unique perspectives and innovations. Their stories exemplify the interplay between established giants and nimble startups, highlighting the importance of adaptability and foresight in a fast-paced industry.

The competition between these entities has sparked a wave of creativity and ingenuity, pushing the boundaries of what is possible. This competitive landscape not only fuels technological advancements but also inspires collaboration among researchers, developers, and practitioners. The rise of open-source platforms, shared knowledge, and collective efforts to address common challenges signifies a shift toward a more inclusive and collaborative AI ecosystem.

As we venture further into the realm of AI, the ethical implications of our choices become increasingly critical. The potential for AI to impact every facet of society—from healthcare and education to finance and entertainment—necessitates a commitment to responsible development. The lessons learned from past experiences, particularly concerning bias, privacy, and accountability, must inform our approach as we design and deploy AI technologies.

Companies like DeepSeek, with their focus on ethical practices, serve as a beacon for others in the industry. Their commitment to transparency and fairness reflects a growing awareness that technological prowess must be accompanied by a sense of social responsibility. As stakeholders—be they developers, policymakers, or consumers—we must collectively advocate for ethical AI practices that prioritize human values and societal well-being.

The journey of AI and GPU innovation is not solely the province of technologists and industry leaders; it is a shared endeavor that requires the engagement of all members of society. As we move forward, we must remain curious, informed, and proactive in our interactions with AI technologies. Each of us has a role to play in shaping the future of AI, whether as advocates for ethical practices, contributors to open-source communities, or informed consumers of AI products.

Engagement in discussions about AI governance, regulations, and the ethical implications of technology is vital. By participating in these conversations, we can help ensure that the trajectory of AI aligns with our collective aspirations for a better future. The promise of AI is not just in its capabilities but in the potential for it to enhance human experiences, drive innovation, and address pressing global challenges.

As we look to the horizon, the future of AI and GPU technology is poised to be characterized by rapid advancements, unforeseen challenges, and exciting opportunities. The evolution of AI will continue to reflect the complexities of our society, adapting to meet our ever-changing needs and aspirations.

In closing, the exploration of the Great AI Puzzle has illuminated the multifaceted nature of artificial intelligence and the vital role of GPU technology in driving its progress. As we navigate this uncharted territory, let us embrace the spirit of inquiry, collaboration, and ethical responsibility that defines this journey.

The story of AI is ongoing, and its future is unwritten. Together, we have the power to influence its trajectory, shaping a world where technology serves humanity and enriches our collective experience. Let us move forward with optimism, curiosity, and a commitment to building a better future through the responsible and innovative application of artificial intelligence.